Selecting the right test reporting tool is a critical decision for modern engineering teams.

Allure Report is widely adopted as an open-source solution for its visually appealing, framework-agnostic reports.

However, its core design as a stateless, disposable report generator presents significant challenges, including high maintenance overhead and a lack of persistent historical analytics.

In this guide, we explore 6 of the best Allure Report alternatives worth considering in 2025. We will compare their features for reporting, analytics, and team collaboration.

The goal is to help you find the right tool to improve your testing workflow.

Best Allure Report Alternatives: How to Choose the Right Tool

A modern tool must fit into how teams already work. We assessed how well each platform embeds quality insights directly into the developer workflow.

Key features include role-based dashboards, automated test summaries in pull requests, and native integrations with essential tools like Slack, Jira, and Linear.

This creates a shared, real-time view of quality across the entire team, fostering a culture of shared ownership.

How to Compare Allure Report Alternatives

Here is a quick comparison of top alternatives to Allure Report that can help you identify your preferred test reporting tool:

| | TestDino | Allure Report | ReportPortal | LambdaTest Test Analytics | Currents |
|---|---|---|---|---|---|
| Pricing (starts at) | $49/month | Free | $569/month | $25/month | $49/month |
| Best for | Playwright Reporting & Analytics | HTML reports | Reporting with history and clustering | Test Analytics | Dashboards and orchestration |
| Framework support | Playwright | Playwright & More | Playwright & More | Playwright & More | Playwright & More |

Best Allure Report Competitors for Modern Test Automation

Here are the six best alternatives to Allure Report that you can choose from to streamline your test reporting:

1. TestDino

$49/month

Best for:

Playwright-first teams, QA leads, DevOps managers, and engineering teams who want AI-powered test reporting and faster debugging.

Platform Type:

Web app dashboard (Playwright native)

Integrations with:

Jira, Linear, Slack, GitHub, GitHub Actions

Key Features:

AI-driven failure categorization (Actual Bug, UI Change, Unstable Test, Miscellaneous)

Flaky test detection with historical trends

Role-Based dashboards (QA, DevOps, managers)

Test Run explorer with logs, screenshots, retries

PR-based insights showing pass/fail next to code

With GitHub integration enabled, TestDino posts AI-generated test run summaries to the relevant commits and pull requests.

Failure error classifications

Instant Slack alerts with test summaries

One-click bug filing into Jira/Linear

Pros

- Built Playwright-native, so setup is faster.

- Cuts debugging time with AI insights and automated triage.

- Provides team-specific views (QA sees flaky tests, managers see stability metrics).

- Cost-effective compared with heavyweight enterprise tools, while still offering quality test reporting.

Cons

- Currently optimized for Playwright only.

First-Hand Experience

TestDino gives Playwright teams faster insight with AI-driven reporting at a lower operational cost than traditional platforms. It ingests standard Playwright outputs, classifies failures with confidence scores, and maps every run to its branch, environment, and pull request.

The result is a clear, centralized view of quality that turns noisy failures into priorities your team can act on immediately. Because it is Playwright-native and integrates directly into CI, setup takes minutes, not days.

Teams get one source of truth for runs, traces, screenshots, videos, and logs, plus role-based dashboards that keep QA, developers, and managers aligned on what blocks release and what can wait.

Smart Reporting & Debugging

TestDino goes beyond pass or fail. AI groups similar errors, labels each failure as Actual Bug, UI Change, Unstable Test, or Miscellaneous, and highlights persistent versus emerging issues with confidence scores.

That context explains why tests failed and where to start, collapsing triage from hours to minutes. The Test Runs view adds status, branch, environment, and AI tags to each execution.

Open a run to see Summary, Specs, History, Configuration, and AI Insights. Evidence is one click away: error text, step timeline, screenshots, and console per attempt or retry. Developers get PR-aware feedback that separates flakes from real blockers, so fixes land faster.

CI/CD Speed & Test Coverage

Built for modern pipelines, TestDino plugs into your CI to upload Playwright reports after execution. It supports parallel runs and exposes timing intelligence so you can identify slow specs, branches, or days without adding framework overhead.

Analytics quantifies average and fastest run times, time saved, speed drift by day, and distribution of fast versus slow runs. Coverage and stability are visible at every level. The Test Case view surfaces slow tests and pass/fail history.

Environment analytics compare success rates and volumes across mapped environments and operating systems, making it obvious whether a slowdown is code, data, or infrastructure. Combined with flaky detection and retry analysis, teams shorten feedback loops without re-running entire suites.
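To make the timing metrics above concrete, here is a minimal Python sketch of how average run time, fastest run time, and per-day averages could be derived from stored run durations. It is an illustration only, not TestDino's implementation; the `runs` records and the `run_time_summary` helper are hypothetical names invented for this example.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# Hypothetical run records: (run date, total duration in seconds).
runs = [
    (date(2025, 1, 6), 420.0),
    (date(2025, 1, 6), 380.0),
    (date(2025, 1, 7), 510.0),
    (date(2025, 1, 7), 450.0),
]

def run_time_summary(runs):
    """Return the average duration, fastest duration, and per-day averages."""
    durations = [seconds for _, seconds in runs]
    by_day = defaultdict(list)
    for day, seconds in runs:
        by_day[day].append(seconds)
    return {
        "average": mean(durations),
        "fastest": min(durations),
        # A rising per-day average is one simple signal of speed drift.
        "daily_average": {day: mean(values) for day, values in sorted(by_day.items())},
    }

summary = run_time_summary(runs)
print(summary)
```

Comparing the per-day averages against the overall average is one simple way to spot the kind of day-over-day slowdown described above.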

Team and Client Collaboration

Role-based dashboards keep each stakeholder focused. The QA dashboard flags flaky clusters and failure categories. The Developer dashboard focuses on PR health, active blockers, and branch stability. The Manager dashboard rolls up trend metrics for release readiness and risk.

Everyone sees the same source of truth, filtered to what they need. Integrations remove copy-paste from communication. Raise Jira or Linear issues prefilled with evidence and history.

Send compact run summaries to Slack with direct links to proof. For distributed teams and client reviews, TestDino's PR view shows full run and retry history with passed, failed, flaky, and skipped counts, so decisions are made with context and audits are straightforward.

Pricing & Value

TestDino offers four distinct plans, each designed to meet the needs of a different group of users.

Final Verdict

TestDino is a strong choice among Allure Report alternatives due to affordable pricing, faster onboarding, and Playwright-native support.

It delivers AI-driven debugging, flaky test detection, and confidence-scored insights that shorten triage time and improve reliability at scale. Role-based dashboards, PR-aware feedback, and persistent history make failure context clear and actionable.

Compared with Allure Report, TestDino provides deeper Playwright integration, in-depth analytics across runs, cases, and environments, and CI/CD optimization without added framework overhead.

The lightweight setup, direct PR mapping, and Slack/Jira/Linear integrations enable QA teams, developers, and managers to collaborate on one source of truth. If you are evaluating Allure Report alternatives, TestDino offers a practical, cost-efficient platform that prioritizes speed, clarity, and measurable quality gains.

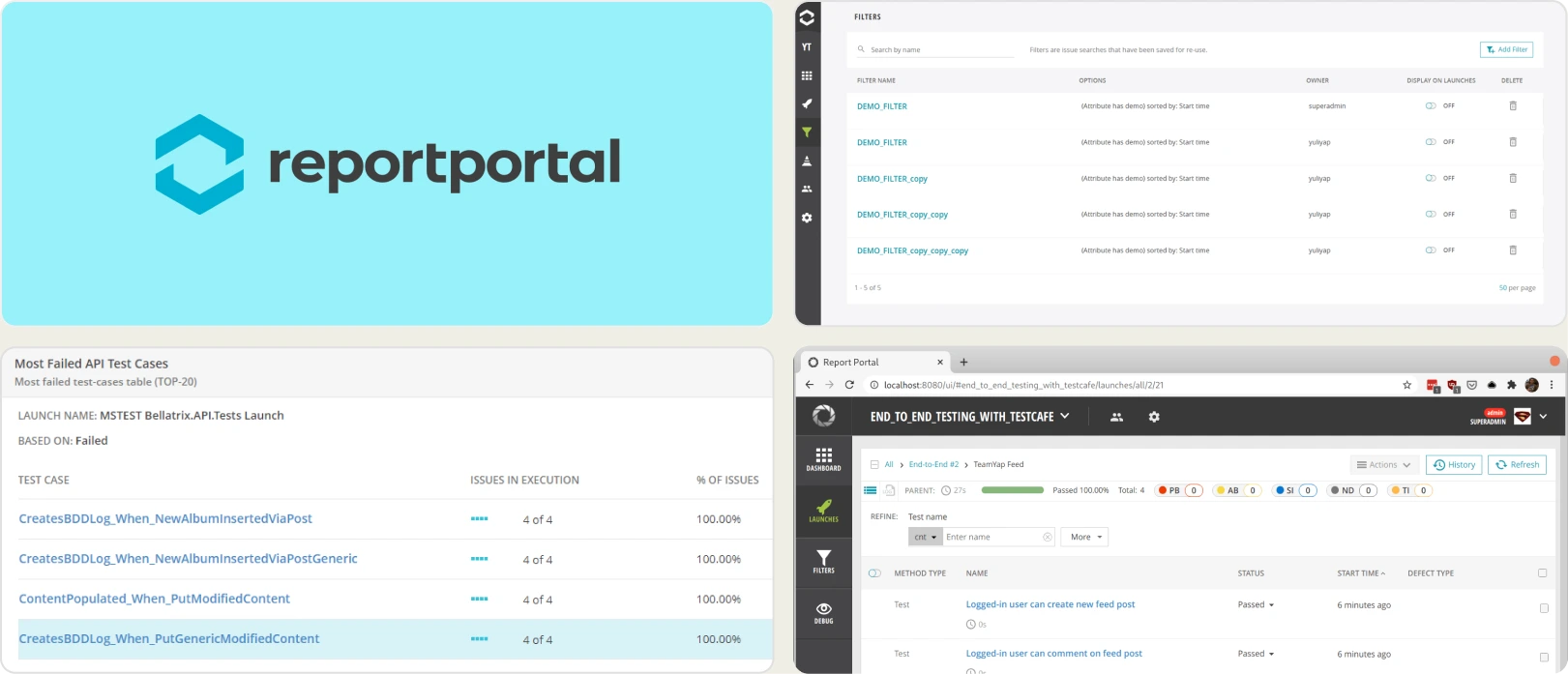

2. ReportPortal

Best for:

QA teams looking for open-source flexibility with basic test analytics.

Platform Type:

Web dashboard platform

Integrations with:

Jenkins, GitHub, GitLab, Jira, Slack, etc.

Key Features:

Open source test reporting

Real-time launch/run reporting

Failure clustering and auto-analysis

Flaky test detection via history

Custom dashboards, widgets, AQL filtering

REST API and export options

Pros

- Free open source core

- Broad framework and CI/CD coverage

- Flexible dashboards and filters

- Community and enterprise support options

Cons

- Limited AI-driven insights out of the box

- Requires hosting, setup, and ongoing maintenance

- UI and UX feel less modern than newer tools

- PR-focused analytics require additional wiring

First-Hand Experience

ReportPortal provides transparency and extensibility typical of open source, plus useful auto-analysis for grouping failures. In practice, teams often allocate ongoing developer time for upgrades, scaling, and fine-tuning dashboards.

The interface is functional, though it may feel dated for stakeholders who expect polished, role-specific views.

Pricing & Value

The open source tier is attractive for cost control, but total cost of ownership includes servers, observability, backups, and engineering effort.

Managed SaaS plans reduce operational burden yet move pricing into an enterprise bracket.

Value hinges on whether your team prefers do-it-yourself flexibility or a turnkey experience with faster insight delivery.

Final Verdict

ReportPortal is a solid option for organizations that prioritize open source, need multi-framework aggregation, and can invest in maintenance.

Teams comparing ReportPortal with alternatives should weigh how important quick onboarding, PR-aware insights, and low-overhead operations are to their roadmap.

If speed to value and minimal upkeep are priorities, favor managed platforms in your shortlist.

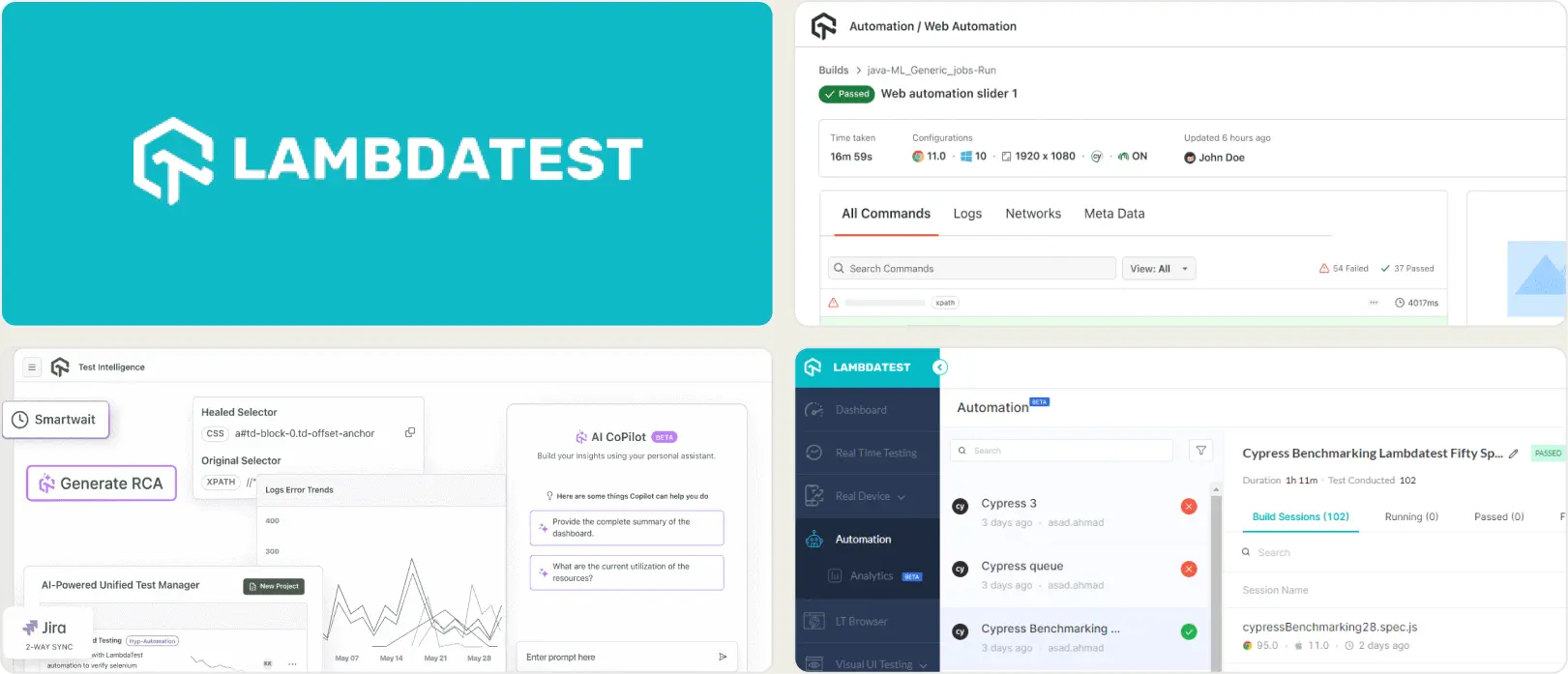

3. LambdaTest Test Analytics

Best for:

Teams needing cross-browser cloud testing with parallel execution.

Platform Type:

Cloud dashboard platform

Integrations with:

Jira, Trello, CI/CD pipelines

Key Features:

Cross-browser and real-device coverage

Cloud automation grid with parallelism

Screenshots, video, and logs

Basic test execution insights

CI/CD triggers and reporting hooks

Pros

- Affordable entry pricing

- Wide browser and device matrix

- Good for functional and visual checks

- Quick cloud onboarding

Cons

- Reporting secondary to execution

- Limited advanced test analytics

- Playwright-native reporting is basic

- Deeper insights often require add-ons

First-Hand Experience

LambdaTest Test Analytics delivers dependable cloud execution across browsers and devices, which helps teams expand coverage quickly. The dashboard surfaces runs, artifacts, and essential telemetry without heavy setup.

Over longer horizons, teams seeking granular test analytics, flaky detection depth, or role-specific insights may feel constrained by reporting that emphasizes execution over analysis.

Pricing & Value

Entry-level plans are cost-effective for pilots and smaller suites. As concurrency, minutes, and device usage increase, higher tiers are typically required for throughput and retention.

Teams evaluating LambdaTest Test Analytics against alternatives should model expected parallel sessions and artifact storage to project total cost.

Final Verdict

LambdaTest Test Analytics is an affordable, flexible option for cross-browser and device execution with straightforward cloud operations.

When comparing it with competitors for test analytics and Playwright automation, consider whether your long-term priorities include advanced debugging signals, historical stability views, and role-aware reporting in addition to scalable execution.

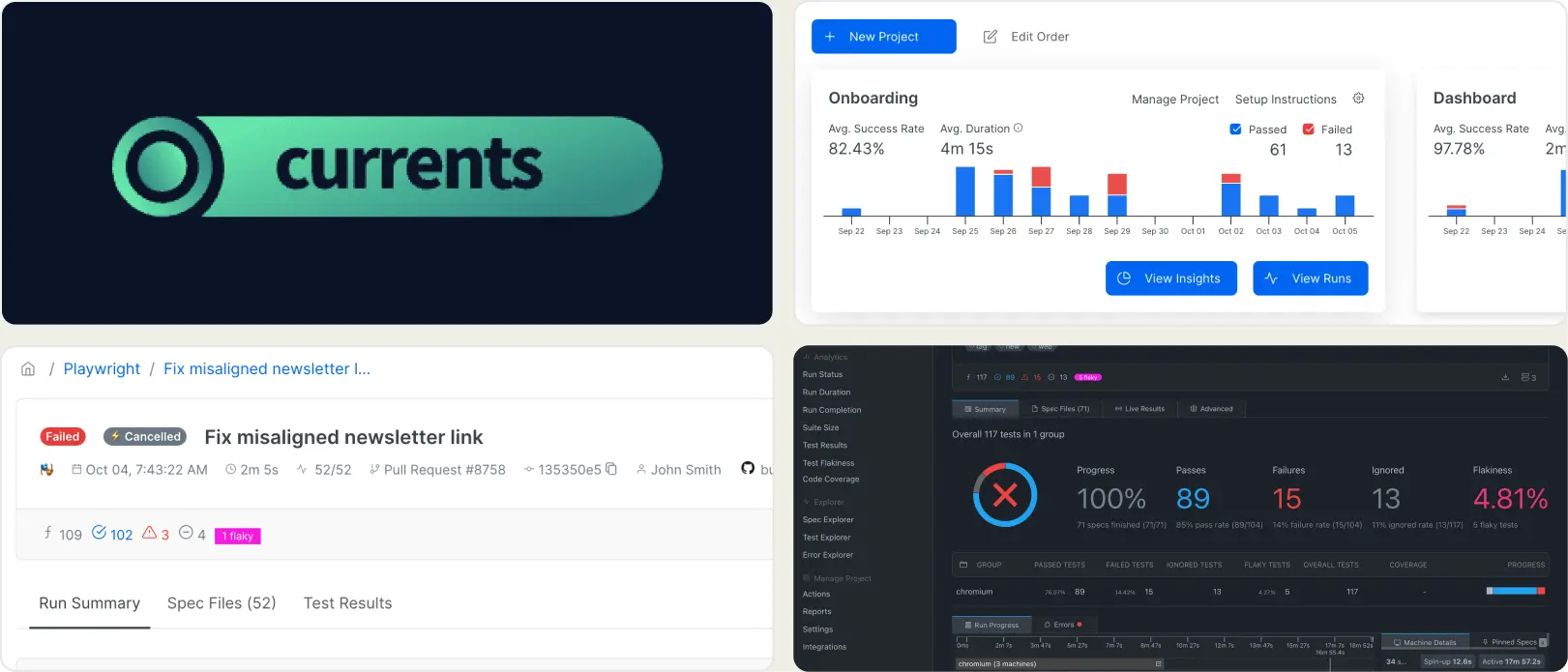

4. Currents

Best for:

Teams that want to live stream Playwright test runs in the cloud.

Platform Type:

Cloud dashboard platform

Integrations with:

GitHub, GitLab, Slack

Key Features:

Live test run streaming

Orchestration for sharding and parallelism

CI/CD pipeline integrations

Basic analytics: status, duration, spec-level failures

Centralized logs, screenshots, and videos

Pros

- Real-time visibility during execution

- Simple cloud-first setup

- Native alignment with Playwright workflows

Cons

- Limited analytics depth

- Usage costs can scale quickly

- Lacks advanced debugging and AI insights

- No dedicated PR-focused views

First-Hand Experience

Currents delivers strong live streaming for Playwright runs, which is useful during active releases and incident response. In day-to-day use, the focus stays on execution monitoring.

Teams that require failure categorization, predictive patterns, or role-specific dashboards may find themselves stitching together additional tooling to close insight gaps.

Pricing & Value

Usage-based pricing lowers the barrier to start, which is attractive for pilots and short-term initiatives. As test volume grows, ongoing costs can rise in lockstep with run frequency and artifacts, so budget planning should account for sustained CI activity and parallelism.

Final Verdict

Currents is a good fit for organizations prioritizing CI/CD integration and real-time test reporting.

When comparing Currents with competitors, assess how important advanced analytics, AI-driven debugging, and PR-aware insights are to your roadmap.

If long-term efficiency and deeper analysis matter, shortlist platforms that provide richer diagnostics in addition to live streaming.

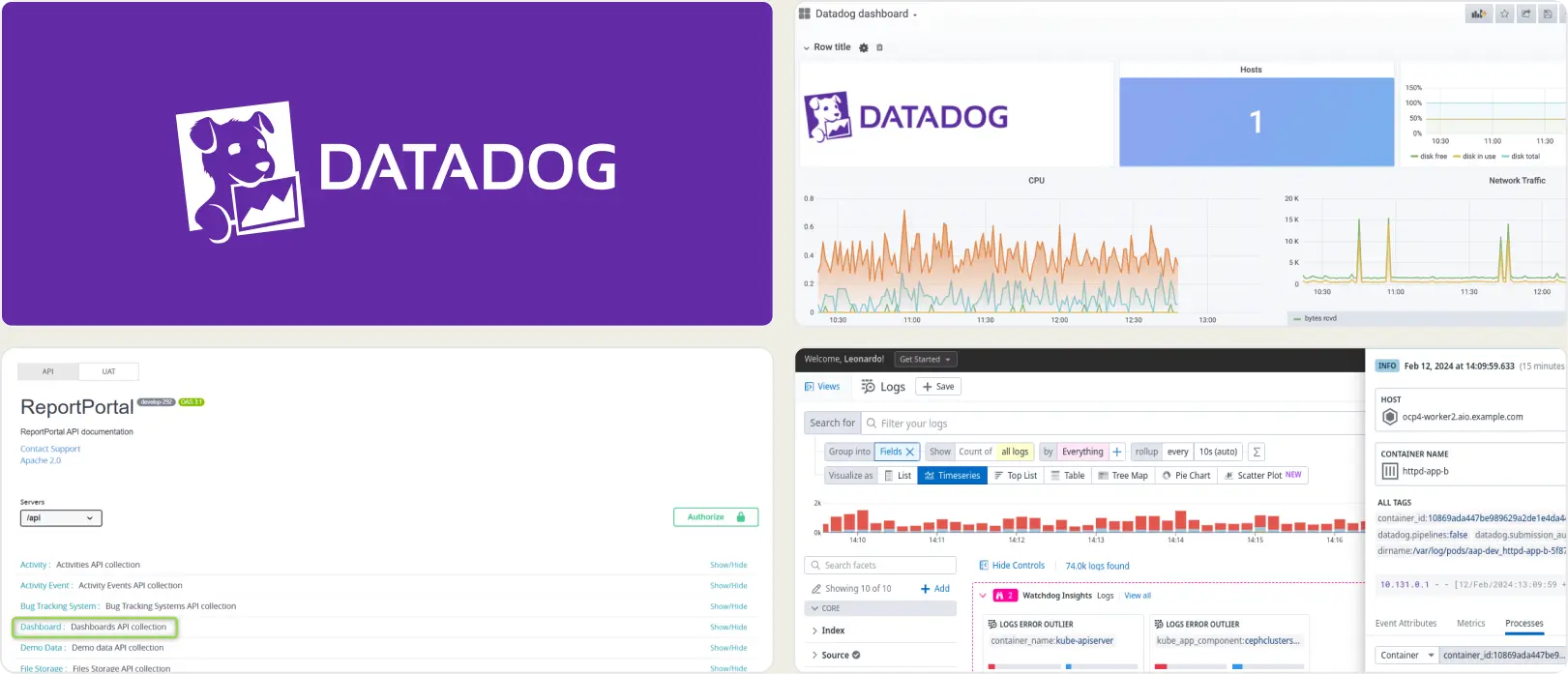

5. Datadog Test Optimization

Best for:

Organizations that already use Datadog for observability and want test monitoring add-ons.

Platform Type:

Cloud dashboard platform

Integrations with:

CI/CD, Slack, Jira

Key Features:

End-to-end observability across logs, metrics, traces, and tests

Synthetic browser and API testing

Custom dashboards and widgets

Alerting and incident workflows

Correlation between test results and backend signals

Pros

- Strong, mature observability suite

- Efficient for teams already using Datadog

- Rich ecosystem and integrations

- Scales to large, distributed systems

Cons

- Cost can rise quickly with test volume and data retention

- Not specialized for deep test analytics and triage

- Steeper learning curve for QA-focused users

First-Hand Experience

Datadog extends familiar observability practices into test monitoring, which benefits teams already operating within its ecosystem.

The breadth is significant, although day-to-day test analysis may require navigation across multiple modules and custom dashboards.

QA-led groups seeking streamlined triage may find the experience broad rather than purpose-built.

Pricing & Value

The usage-based model aligns spend with data ingestion and retention, but costs can be difficult to forecast as logs, traces, and test artifacts scale.

The value is highest when unified observability is a core requirement and test data must live beside infrastructure telemetry.

Final Verdict

Datadog is a strong option for enterprises that want test observability embedded in a full-stack monitoring platform.

Teams comparing it with competitors should consider whether they need a general observability layer or a specialized test reporting tool with focused debugging features.

If predictable costs and streamlined QA analytics are priorities, include dedicated test reporting tools in your shortlist.

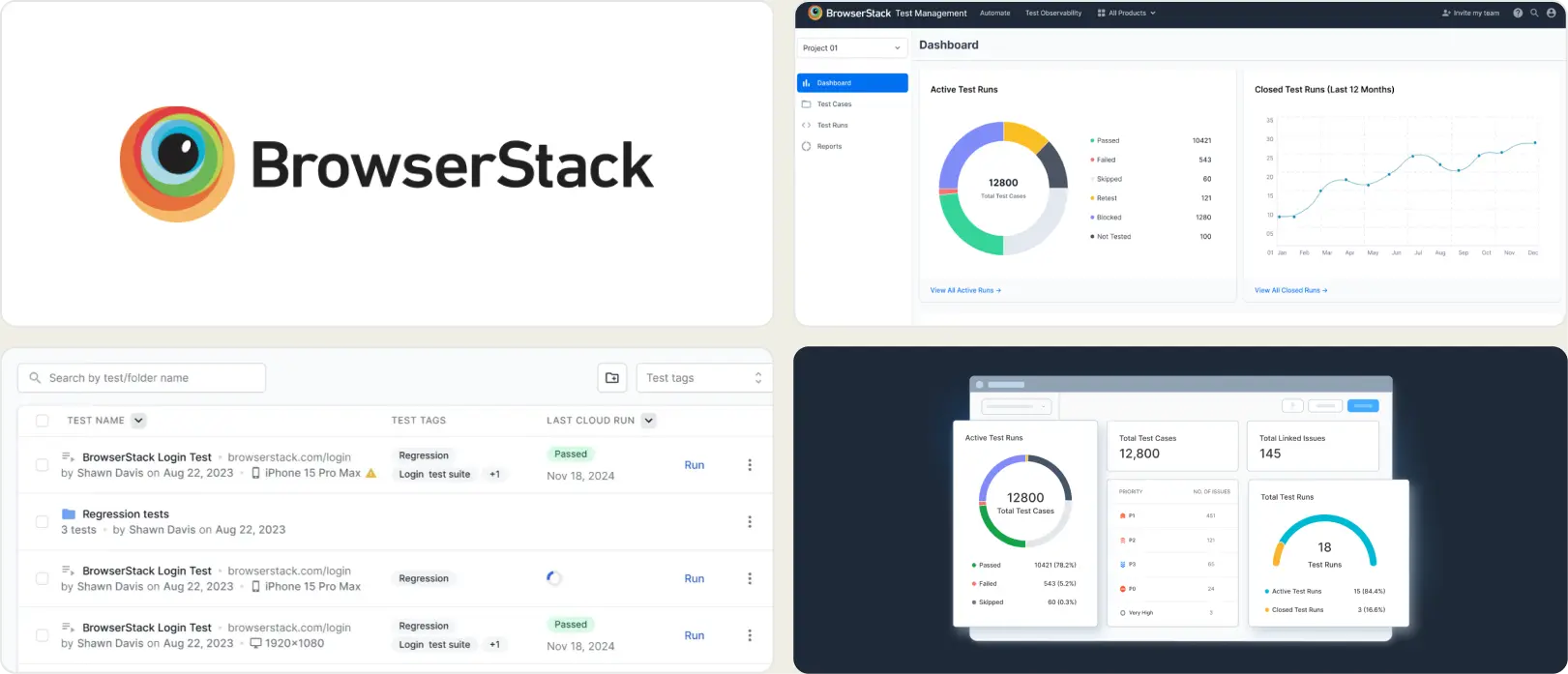

6. BrowserStack Test Reporting

Best for:

Teams already using BrowserStack for cross-browser testing.

Platform Type:

Cloud dashboard platform

Integrations with:

Jira, CI/CD tools

Key Features:

Test execution reports

Cross-browser insights

Screenshots and video recording

Centralized dashboard for runs

Basic trends and error grouping

Pros

- Seamless if you already use BrowserStack

- Easy cloud onboarding

- Works well for cross-browser runs

Cons

- Limited analytics depth

- Execution-centric, not analysis-centric

- Less tailored for Playwright debugging

First-Hand Experience

BrowserStack Test Reporting handles execution visibility across browsers and devices reliably. Logs, screenshots, and videos are easy to access, which helps during active triage.

Over time, teams that rely on historical signals, role-specific views, or granular root-cause patterns may find the reporting layer relatively basic for long-term optimization.

Pricing & Value

Reporting is bundled, which simplifies procurement. Pricing scales with browser minutes and test volume, so costs can rise as automation and parallelism increase.

Teams comparing BrowserStack Test Reporting with alternatives should factor in ongoing usage patterns, retention needs, and the depth of Playwright reporting required.

Final Verdict

BrowserStack Test Reporting is a solid choice for organizations prioritizing cloud execution and quick visibility across devices.

When comparing it with competitors, evaluate whether your roadmap emphasizes execution coverage or advanced test analytics and debugging depth.

If long-term insight and scalability of analysis are key, shortlist platforms designed for deeper diagnostics in addition to cross-browser runs.

How to Select an Alternative to Allure Report

Choosing the right alternative means moving from a simple artifact generator to an intelligent analytics platform.

Your decision should be guided by your team's need for speed, collaboration, and data-driven insights.

Smart Reporting and Debugging

A modern alternative must go beyond pass/fail logs. Look for features like AI-powered failure categorization, flaky test detection, and root cause analysis.

Allure Report requires manual, rule-based classification, whereas intelligent platforms automatically surface critical bugs, saving your team hours of triage.
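As a rough illustration of what flaky test detection involves, the Python sketch below flags any test that both passed and failed on the same commit, for example by failing first and passing on retry. This is one common heuristic, not the algorithm of any tool named in this guide; the `history` records and the `find_flaky_tests` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical history: (test name, commit SHA, outcome) per attempt.
history = [
    ("login works", "abc123", "failed"),
    ("login works", "abc123", "passed"),  # passed on retry, same commit
    ("checkout total", "abc123", "failed"),
    ("checkout total", "abc123", "failed"),
    ("search filters", "abc123", "passed"),
]

def find_flaky_tests(history):
    """Return tests that both passed and failed on the same commit."""
    outcomes = defaultdict(set)
    for test, commit, outcome in history:
        outcomes[(test, commit)].add(outcome)
    return sorted(
        {test for (test, _), seen in outcomes.items() if {"passed", "failed"} <= seen}
    )

flaky = find_flaky_tests(history)
print(flaky)
```

Here "checkout total" fails consistently, so it is treated as a likely real failure rather than a flake. A check like this only works when attempt-level history is stored somewhere, which is the gap a hosted platform fills.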

Team Collaboration

Effective engineering requires a single source of truth. Allure Report lacks any user management or real-time collaboration features.

Select a centralized platform that offers role-based dashboards, instant notifications via Slack, and direct integrations with ticketing systems like Jira to keep developers, QA, and managers aligned.

Analytics and Test Coverage

Data is useless if it disappears after each run. Allure Report's core weakness is its stateless, "disposable" nature, which prevents long-term trend analysis.

A superior alternative must provide data storage, allowing you to track test stability, failure trends, and performance over weeks and months to make informed decisions about quality.
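The Python sketch below shows the kind of trend analysis that persistent storage makes possible: grouping stored results by ISO week and computing a pass rate for each. The `results` data and the `weekly_pass_rate` helper are hypothetical and assume results are kept as simple (date, passed) pairs; a real platform would query its own data store.

```python
from collections import defaultdict
from datetime import date

# Hypothetical stored results: (run date, passed?) per test execution.
results = [
    (date(2025, 1, 6), True),
    (date(2025, 1, 7), True),
    (date(2025, 1, 8), False),
    (date(2025, 1, 13), True),
    (date(2025, 1, 14), False),
    (date(2025, 1, 15), False),
    (date(2025, 1, 16), False),
]

def weekly_pass_rate(results):
    """Group results by ISO (year, week) and return the pass rate per week."""
    counts = defaultdict(lambda: [0, 0])  # week -> [passed, total]
    for day, passed in results:
        iso = day.isocalendar()
        week = (iso[0], iso[1])
        counts[week][0] += int(passed)
        counts[week][1] += 1
    return {week: passed / total for week, (passed, total) in sorted(counts.items())}

trend = weekly_pass_rate(results)
print(trend)
```

A falling pass rate from one week to the next is the kind of signal that is lost when each report is generated and then thrown away.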

CI/CD Speed and Integration

Your reporting tool should simplify your pipeline, not complicate it.

Allure Report is known for its high operational overhead, especially when trying to maintain a history of test runs.

Look for a tool that offers a simple, one-step CI/CD integration and does not require complex scripting or artifact management.

Ease of Use & Support

A powerful tool should not require a steep learning curve. Allure Report's setup can be challenging for new users.

Prioritize platforms that offer quick onboarding, an intuitive user interface, and responsive customer support to ensure your team can get value from day one.

Wrapping Up

While Allure Report is a capable tool for visualizing a single test run, modern engineering teams require more. They need an intelligent and low-maintenance platform that provides actionable insights, not just disposable artifacts.

The move away from stateless generators is a move toward proactive quality management. Modern tools like TestDino deliver the speed, intelligence, and collaboration that teams need to ship with confidence.

By providing a centralized platform with AI-powered analytics and a simple CI setup, they solve the core challenges that make teams look for an alternative to Allure Report in the first place.

Ready to see the difference a true analytics platform can make? Start your free trial today.


FAQs

Allure Report is great for single-run HTML snapshots, but it is stateless. A hosted analytics platform stores history, shows trends, and gives searchable evidence so teams track stability and spot regressions over time.
